Evaluating Emerging Technological Trends and the Convergence of Artificial Intelligence and Graphics Innovation

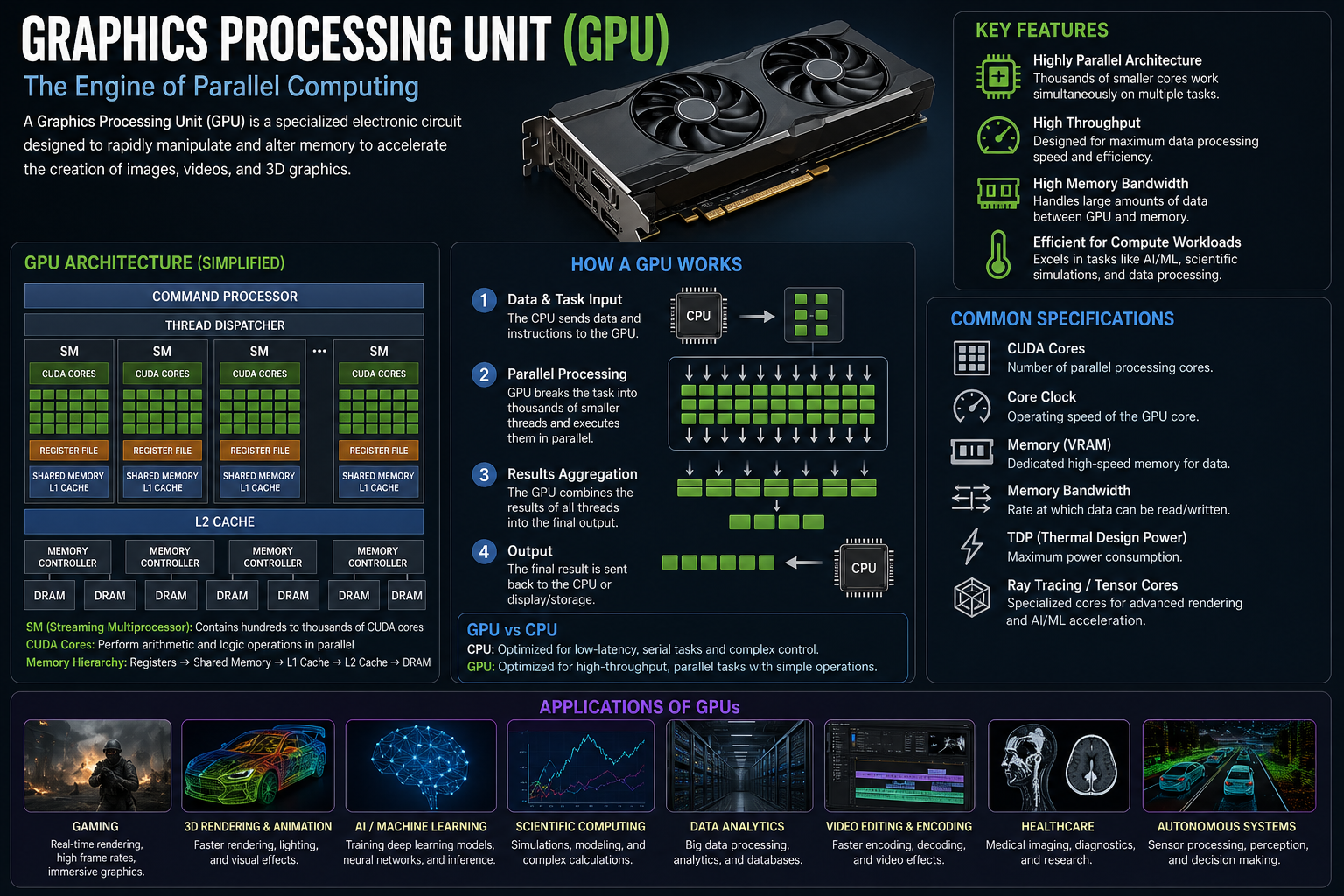

The most significant development currently shaping the visual computing industry is the structural integration of artificial intelligence capabilities directly into hardware architectures, a shift that is redefining what a graphics processor actually does. GPUs are no longer used merely to render triangles; today they are multi-purpose accelerators that use neural networks to denoise, upscale, and interpolate frames in real time. Coupled with the growing integration of deep learning libraries into the hardware-software stack, this is driving graphics processing unit market trends that emphasize software-defined flexibility and long-term upgradeability. It also means that GPUs are increasingly being optimized for the "inference era," in which the ability to run pre-trained models efficiently is as critical as the raw horsepower needed to train them.

This convergence is felt most acutely in the gaming and interactive media sectors, where users now expect console-grade fidelity and performance across a wide array of devices, from desktops to cloud-streamed portable platforms. To deliver these experiences, hardware manufacturers are betting heavily on neural rendering techniques that can close the performance gap created by high-resolution displays, typically by rendering fewer pixels natively and reconstructing the rest. This level of innovation brings its own challenges, particularly in power management and heat dissipation. As processors become more complex, traditional cooling systems are approaching their thermal limits, forcing engineers to explore more exotic cooling solutions and more efficient logic designs. Looking ahead, the ability to integrate AI-driven processing into every part of the graphics pipeline will remain the defining characteristic of leading hardware designs, keeping these processors essential to the next generation of digital, interactive experiences.
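To make the performance gap from high-resolution displays concrete, the following is a minimal sketch of the arithmetic behind upscaling. It only compares pixel counts of standard resolutions; the function names (`pixel_count`, `upscale_ratio`) are illustrative and do not come from any vendor SDK, and the ratio says nothing about actual frame rates, which depend on the workload.

```python
# Standard display resolutions as (width, height) in pixels.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "4K": (3840, 2160),
}


def pixel_count(name):
    """Total pixels in one frame at the named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def upscale_ratio(render, display):
    """Displayed pixels per natively rendered pixel.

    A ratio of 4.0 means the upscaler must produce four output pixels
    for every pixel the GPU actually shaded.
    """
    return pixel_count(display) / pixel_count(render)


if __name__ == "__main__":
    for render in ("1080p", "1440p"):
        ratio = upscale_ratio(render, "4K")
        print(f"{render} -> 4K: {ratio:.2f}x as many pixels to display")
```

Rendering at 1080p and upscaling to 4K means the GPU shades only a quarter of the displayed pixels per frame, which is the headroom that neural upscaling aims to exploit.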

FAQs

- What is "inference-era" hardware? It refers to silicon specifically optimized to run AI models after they have already been trained (a step known as inference), focusing on latency, cost-efficiency, and energy efficiency rather than sheer training speed.

- Why is thermal management becoming a bigger challenge for GPU manufacturers? As processors get more powerful and dense, they generate more heat in a smaller area, requiring advanced cooling solutions (such as liquid cooling) to prevent performance throttling or hardware damage.